What is a web crawler?

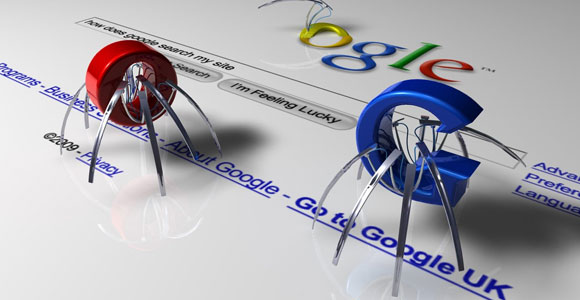

A web crawler, spider, or search engine bot downloads and indexes content from all over the Internet. The goal of such a bot is to learn what (almost) every webpage on the web is about, so that the information can be retrieved when it’s needed. They’re called “web crawlers” because crawling is the technical term for automatically accessing a website and obtaining data through a software program.

These bots are almost always operated by search engines. By applying a search algorithm to the data collected by web crawlers, search engines can return relevant links in response to user queries. The result is the list of webpages that appears after a user types a search into Google or Bing (or another search engine).

What is search indexing?

Search indexing is like creating a library card catalog for the Internet, so that a search engine knows where on the Internet to retrieve information when a person searches for it. It can also be compared to the index at the back of a book, which lists every place in the book where a certain topic or phrase is mentioned.

Indexing focuses mostly on the text that appears on the page, and on metadata about the page that users don’t see (data that tells search engines what the page is about, such as its meta title and meta description). When most search engines index a page, they add every word on the page to the index, except, in Google’s case, words like “a,” “an,” and “the.” When users search for those words, the search engine goes through its index of all the pages where those words appear and selects the most relevant ones.
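To make the idea of an index concrete, here is a minimal Python sketch of an inverted index, the same structure as the index at the back of a book: each word maps to the pages where it appears. It assumes the pages are already downloaded as plain text, skips only the three stop words named above, and uses a deliberately naive relevance score (how often the query words occur on a page). The function names and example URLs are hypothetical; real search engines use far more elaborate tokenization and ranking.

```python
import re
from collections import defaultdict

# Only the stop words mentioned in the text; real engines use their own lists.
STOP_WORDS = {"a", "an", "the"}

def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def build_index(pages: dict[str, str]) -> dict[str, dict[str, int]]:
    """Map each word to {url: number of times the word occurs on that page}."""
    index: dict[str, dict[str, int]] = defaultdict(dict)
    for url, text in pages.items():
        for word in tokenize(text):
            if word in STOP_WORDS:
                continue  # stop words are left out of the index
            index[word][url] = index[word].get(url, 0) + 1
    return index

def search(index: dict[str, dict[str, int]], query: str) -> list[str]:
    """Return URLs containing every query word, highest naive score first."""
    words = [w for w in tokenize(query) if w not in STOP_WORDS]
    if not words:
        return []
    # Keep only pages that contain all of the query words.
    candidates = set(index.get(words[0], {}))
    for w in words[1:]:
        candidates &= set(index.get(w, {}))
    # Naive relevance: total occurrences of the query words on the page.
    score = {url: sum(index[w][url] for w in words) for url in candidates}
    # Sort by descending score, then by URL so ties are deterministic.
    return sorted(candidates, key=lambda url: (-score[url], url))

pages = {
    "https://example.com/cats": "The cat sat on the mat. A cat is a pet.",
    "https://example.com/dogs": "A dog is a pet, and the dog likes the park.",
}
index = build_index(pages)
print(search(index, "the cat"))  # ['https://example.com/cats']
print(search(index, "pet"))      # ['https://example.com/cats', 'https://example.com/dogs']
```

Because “the” is never stored, a query like “the cat” is answered purely from the entry for “cat.”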

How do web crawlers work?

The Internet is constantly changing and expanding. Because it is impossible to know how many webpages there are on the Internet in total, web crawler bots start from a seed, or a list of known URLs. They crawl the webpages at those URLs first. As they crawl those pages, they find hyperlinks to other URLs, and they add those to the list of pages to crawl next.
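The seed-and-frontier process described above is essentially a breadth-first traversal of the link graph. The minimal Python sketch below simulates it. The `FAKE_WEB` dictionary and `fetch_links` function are hypothetical stand-ins for downloading a page and extracting its links, so the example runs without network access; `max_pages` is an assumed cap that keeps the crawl from running forever.

```python
from collections import deque

# Hypothetical stand-in for the Internet: each URL maps to the links on that page.
# In a real crawler this lookup would be an HTTP fetch plus link extraction.
FAKE_WEB = {
    "https://a.example/": ["https://b.example/", "https://c.example/"],
    "https://b.example/": ["https://c.example/", "https://d.example/"],
    "https://c.example/": ["https://a.example/"],
    "https://d.example/": [],
}

def fetch_links(url: str) -> list[str]:
    """Hypothetical fetcher: return the hyperlinks discovered on `url`."""
    return FAKE_WEB.get(url, [])

def crawl(seeds: list[str], max_pages: int = 100) -> list[str]:
    """Breadth-first crawl starting from a seed list of known URLs."""
    frontier = deque(seeds)  # pages waiting to be crawled next
    seen = set(seeds)        # URLs already queued, so none is added twice
    visited = []             # order in which pages were actually crawled
    while frontier and len(visited) < max_pages:
        url = frontier.popleft()
        visited.append(url)
        for link in fetch_links(url):
            if link not in seen:
                seen.add(link)
                frontier.append(link)
    return visited

print(crawl(["https://a.example/"]))
# ['https://a.example/', 'https://b.example/', 'https://c.example/', 'https://d.example/']
```

The `seen` set matters because pages link back to each other (here `c` links back to `a`); without it the crawler would loop.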

Given the vast number of webpages on the Internet that could be indexed for search, this process could go on almost indefinitely. However, a web crawler follows certain policies that make it more selective about which pages to crawl, in what order to crawl them, and how often to crawl them again to check for content updates.

What are robots?

Search robots, also called bots, wanderers, spiders, and crawlers, are the tools many search engines, such as Google, Bing, and Yahoo!, use to build their databases. Most robots work like web browsers, except that they don’t need any user interaction.

Robots visit webpages, usually following links to find and connect to other sites. They can index titles, summaries, or the entire content of documents much more quickly and thoroughly than a human could.
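Following links means pulling them out of each downloaded page. Here is a minimal sketch using Python’s standard-library `html.parser` and `urllib.parse`. It assumes the links of interest appear as `href` attributes on `<a>` tags, resolves relative links against the page’s own URL, discards `#fragment` parts (which point within the same page), and keeps only `http`/`https` links. The `LinkExtractor` and `extract_links` names are hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin

class LinkExtractor(HTMLParser):
    """Collect absolute URLs from the href attributes of <a> tags."""

    def __init__(self, base_url: str) -> None:
        super().__init__()
        self.base_url = base_url
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names, so <A HREF> matches too.
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                # Resolve relative links, then strip any #fragment.
                absolute, _fragment = urldefrag(urljoin(self.base_url, value))
                if absolute.startswith(("http://", "https://")):
                    self.links.append(absolute)

def extract_links(html: str, base_url: str) -> list[str]:
    """Return the crawlable links found in `html`, in document order."""
    parser = LinkExtractor(base_url)
    parser.feed(html)
    parser.close()
    return parser.links

page = (
    '<a href="/about">About</a> '
    '<a href="https://other.example/x#top">X</a> '
    '<a href="mailto:me@example.com">Mail</a> '
    '<A HREF="page2.html">Next</A>'
)
print(extract_links(page, "https://site.example/blog/"))
# ['https://site.example/about', 'https://other.example/x', 'https://site.example/blog/page2.html']
```

The `mailto:` link is dropped because it does not lead to another webpage a crawler could fetch.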

While their speed and efficiency make them very attractive to search engine operators, search robots, especially poorly designed ones, can overwhelm some servers. Administrators can ban or limit robot access by placing robots.txt files on their servers that describe how their sites may be accessed.
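Python’s standard library includes `urllib.robotparser`, which a well-behaved crawler can use to check a site’s robots.txt rules before fetching a URL. The sketch below parses a hypothetical robots.txt held in a string (a real crawler would download it from the site’s root, for example with `RobotFileParser.set_url()` and `read()`). It then asks whether particular user agents may fetch particular paths. The site, paths, and agent names are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: every agent is kept out of /private/ and asked to
# wait 5 seconds between requests; an agent named "BadBot" is banned entirely.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5

User-agent: BadBot
Disallow: /
"""

def load_policy(robots_txt: str) -> RobotFileParser:
    """Build a parser from robots.txt text already held in memory."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser

policy = load_policy(ROBOTS_TXT)
print(policy.can_fetch("MyCrawler", "https://site.example/index.html"))         # True
print(policy.can_fetch("MyCrawler", "https://site.example/private/data.html"))  # False
print(policy.can_fetch("BadBot", "https://site.example/index.html"))            # False
print(policy.crawl_delay("MyCrawler"))                                          # 5
```

Note that robots.txt is advisory: it tells cooperative crawlers what the site owner wants, but it does not technically prevent access.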

What are web spiders?

A spider is a program that visits websites and reads their pages and other information in order to create entries for a search engine index. The major search engines on the Web all have such a program, which is also known as a “crawler” or a “bot.” Spiders are typically programmed to visit sites that their owners have submitted as new or updated. Entire sites or specific pages can be selectively visited and indexed.
